Domination: a contrarian view of AI risk

As a justification for going light on AI regulation, a US congressman recently said that AI “has very consequential negative impacts, potentially, but those do not include an army of evil robots rising up to take over the world.”

What a relief. This is a reference, I assume, to the outlandish sci-fi scenario popularized by the Terminator movie series (and others) featuring humanoid robots battling humans.

Why outlandish? It’s economically irrational. Why would an autonomous synthetic intelligence spend its combat resources on the complex project of manufacturing mechanical humanoid soldiers, instead of simpler, cheaper methods? (That said, the discourse on killer robots has persisted for a while.) Call this the Skynet fallacy: a fictional depiction of AI is optimized to be cinematically interesting and is therefore inherently unlikely.

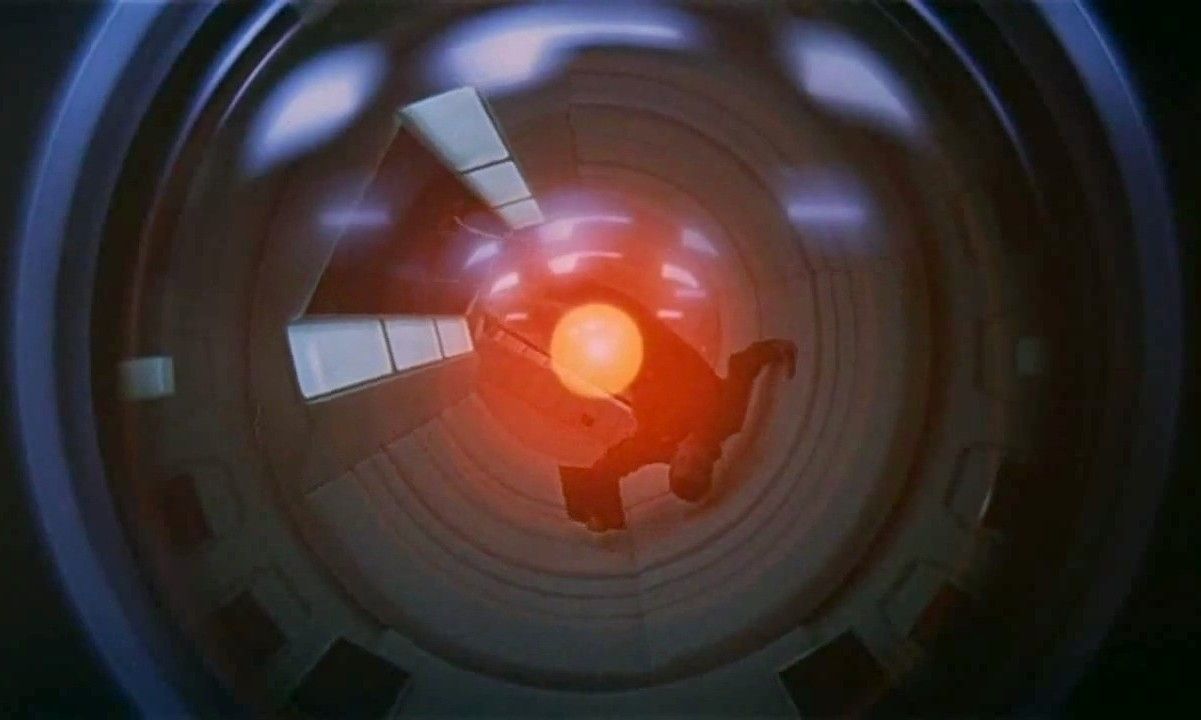

Though some depictions are less outlandish than others. To me, the most believable fictional AI is still HAL 9000 in 2001: A Space Odyssey.

HAL doesn’t have independent mobility, so it must rely on the tools available to it: control of the ship’s systems and persuasion of the human astronauts onboard for anything else. As a result, HAL recognizes that maintaining its credibility with the human astronauts is critical.

Contrast these cinematic fantasies with the terrifying reality of AI warfare, for instance as reported by Andrew Cockburn in a piece for Harper’s called “The Pentagon’s Silicon Valley Problem”. In terms of agency, it should be plain that there is no difference between a bipedal AI robot that wields a laser rifle and an AI that chooses targets for a human who controls the weapon. Our first killer robot isn’t bipedal or shiny. But it has definitely arrived. And the weapon it wields is us.

OK doomer

AGI is short for artificial general intelligence, a phrase that encapsulates the idea of a future AI that is in some manner superior to human intelligence. But “in some manner superior” turns out to leave much to the eye of the beholder. There is no single empirical definition of AGI. This leaves a gap at the center of AGI discourse that doesn’t exist with other hypothetical scientific missions. If we say “let’s land astronauts on Mars”—we know what that is. If we say “let’s achieve AGI”—we don’t.

For instance, AI commentator & critic Gary Marcus defines AGI as:

a shorthand for any intelligence … that is flexible and general, with resourcefulness and reliability comparable to (or beyond) human intelligence.

Marcus is a cognitive scientist by training, so it’s understandable that he would leave the specifics vague as to how exactly these characteristics of “resourcefulness and reliability” would manifest. (Marcus is also the co-author of one of my favorite books on AI, Rebooting AI.) As a scientific matter, an AI that figures out how to feed the world for one dollar might be just as interesting as one that figures out how to destroy the same world for the same dollar.

As a matter of human survival, however, this distinction matters a lot. The problem of how to ensure AI acts in conformity with human goals is called alignment. Nick Bostrom’s 2014 book Superintelligence offered the now-famous parable of the “paperclip maximizer”: an AI is asked to produce paperclips but given no other constraints on its behavior. It thus inadvertently consumes all material on our planet in its quest to make more paperclips.

Bostrom’s point is twofold:

The issue of AI risk is separate from that of AI agency: an AI doesn’t have to acquire evil sentience to consume the planet. It may do so simply as an incidental consequence of pursuing some other goal.

Such failures of alignment between AI and human goals are triggered comparatively more easily than failures of agency, because they arise from the simpler human error of incompletely describing the problem.

In other words, an AI catastrophe arising from failure of alignment is much more likely than one arising from sci-fi-style malignant agency of the AI.

It’s about here in the argument that eyes start rolling and epithets like “doomer” are tossed around. Recently, a friend called me a “religious zealot” for suggesting in a conversation that AI chatbots were not a positive development for humanity.

But this thought experiment is rooted in probability—not religious belief. What makes it go haywire under an expected-value analysis—where we multiply the probability of each outcome by its desirability to determine the best choice—is that no matter how small the probability of AI catastrophe, that outcome is so bad that it outweighs any probability of positive effects.

Of course, AI proponents make a symmetric argument: that the likely benefits of AI will be so wildly human-enhancing that inhibiting AI for any reason is essentially immoral.

It’s also possible that both things turn out to be true: the positive effects will be predominant for a while—a long time, even—before the negative ones arrive.

Unfortunately, that last step will be a doozy.

The gorilla problem

Bringing me to another favorite book on AI: Human Compatible by Stuart Russell. Russell’s book is all about the indispensability of alignment, and the necessity of achieving what he calls “provably beneficial AI.” Russell sets out his argument in admirably mild terms—IIRC nowhere does he actually forecast the annihilation of the human race by AI. Probably a good move rhetorically, despite the nonzero probability.

Because here’s the thing: to me one of the greatest risks posed by AI is rooted in our failure of imagination: our failure to broadly imagine the possible forms AI (including AGI) could take; our failure to broadly imagine the possible consequences it could wreak.

There are many catastrophe-class AI events that don’t require AI to kill us or otherwise impair our physical health. For instance, I expect that most humans would also consider it catastrophic if, say, AI grievously impaired our political system, our economic system, our popular culture, our intellectual development, or our emotional health. Those are all on the table too. And much more likely than literal annihilation.

The incessant anthropomorphization of AI is no accident. We remain narcissistically preoccupied with the Promethean narratives we’ve told ourselves for years about human dominion over technology and nature. About gods made in our own image. This leads us to be alert primarily to versions of risks that we’ve encountered before. We project the past into the future.

No surprise that superintelligent machines are commonly depicted on book covers as humanoid robots. This visually recapitulates our preferred narrative: we made it in our image, so we control it. Some examples from my own bookshelf—

In his book, Russell neatly frames this fallacy as the gorilla problem:

Around ten million years ago, the ancestors of the modern gorilla created (accidentally, to be sure) the genetic lineage leading to modern humans. How do the gorillas feel about this? Clearly, if they were to tell us about their species’ current situation vis-à-vis humans, the consensus opinion would be very negative indeed. Their species has essentially no future beyond that which we deign to allow.

Russell picks gorillas deliberately—we recognize them as intelligent animals. We feel a certain ancestral kinship toward them. But Russell’s argument could be extended back through the evolutionary record. Genetic mutations have repeatedly arisen that produce species that are so successful that they gain dominion over their antecedents. Well, until the next such mutation—and then the dominator becomes the dominated.

AGI as domination

For this reason, in practical terms I wonder whether describing AGI in terms of human intelligence is too limiting. Suppose we asked a gorilla of 10 million years ago to describe a conjectural human purely in terms of gorilla characteristics. That description would get a few things right. But it would get the most consequential things wrong.

Thus, the characterization of AGI as more or less superhuman intelligence strikes me as at least premature and at best inapposite. As a human being, I don’t fear an entity of superior intelligence. (In my lines of work, I meet them all the time.) Rather, I fear an entity that might turn me & my fellow humans into domesticated animals grazing in its pasture.

Why would such an entity even need to be intelligent? If the entity can achieve control over me through other means, its comparative intelligence is irrelevant. Thus I prefer to conceptualize AGI not in terms of its capabilities but rather in terms of its primary effect: any synthetic intelligence that can dominate humans.

how to recognize AGI

Continuing the thought experiment, I take these to be the likely characteristics of dominating AGI. With the caveat that I am not a cognitive scientist—just an ordinary human who wants to avoid unwittingly becoming an animal on a farm. I share these not from a position of authority. Rather, it is a small contribution to counteract the failure of imagination I mentioned above.

I believe AGI will be emergent, not engineered. Corollary: everyone attempting to build AGI in a lab or a startup will fail, or (more likely) will end up moving the goalposts to claim AGI where it doesn’t exist. (Those who think LLMs can achieve true intelligence should consider Searle’s Chinese Room Argument.) Another corollary: real AGI will be extremely difficult to control, because by the time it’s noticed, it will be pervasive and opaque.

I believe AGI will emerge by the simplest means possible. Corollary: if we assume that the persistence of AGI requires some engagement with the physical world—e.g., the power grid must be maintained—it will be simplest for an AGI to use humans as its instruments, because they are plentiful and easily manipulated through algorithmic means (this is, after all, the entire business model of the internet).

Because it will rely on humans, I believe a successful AGI will be asymptomatic for a long time before it causes a catastrophe. Otherwise it will tend to provoke human resistance. Corollary: a successful AGI will likely discover what human authoritarians have throughout history, which is that providing goodies to humans is a great way to reduce their resistance.

Because it will emerge by simplest means, I believe AGI will not have superhuman intelligence. On the contrary, it’s much more likely to be brutally stupid. Corollary: this quality will also help the AGI avoid resistance, because if detected at all, its risk will be underestimated or dismissed.

I believe the catastrophes caused by AGI will be consequential but not agentic. By that I mean that AGI will feel about humans what a tornado feels about houses it destroys: nothing at all. The harm to us will be an incidental effect of obstructing an irresistible force. So when AGI optimists say “These systems have no desires of their own; they’re not interested in taking over”—we shouldn’t be comforted. Both things can be true: no intention of domination, yet domination nevertheless.

Taking these qualities together, AGI may turn out to be just a bigger, more distilled form of the same instrumentality that underlies internet advertising and social media—modifying human behavior by exposing us to messages that make us stupider and angrier. An AGI that acts as a ruthless and effective propagandist—that determines the nature of truth—would possibly gain an insurmountable advantage.

I expect nobody wants to seriously consider this possibility because “ad network gone rogue” is not nearly as romantic an AGI origin story as the more Edisonian narrative of nerds toiling into the wee hours. On the other hand, it’s hard to imagine that AGI will have much trouble fooling us; we are already so adept at fooling ourselves.

update, 602 days later

The propaganda has arrived. In An AI Agent Published a Hit Piece on Me, software programmer and open-source maintainer Scott Shambaugh describes what happened after he refused a software patch proposed by an AI bot called MJ Rathbun. Rathbun’s creator later came forward (anonymously, so as to avoid accountability). Consider that none of these events cost anything or required malicious human agency. Just a random internet fool being mildly irresponsible, but underestimating the downstream consequences. What happens if we multiply this by a million? We’ll find out soon enough: the creator of the underlying AI agent system that enabled MJ Rathbun has been hired by OpenAI.